‘We’re victims of our own digital success’ – Fraudsters tricking us into believing they are someone they are not

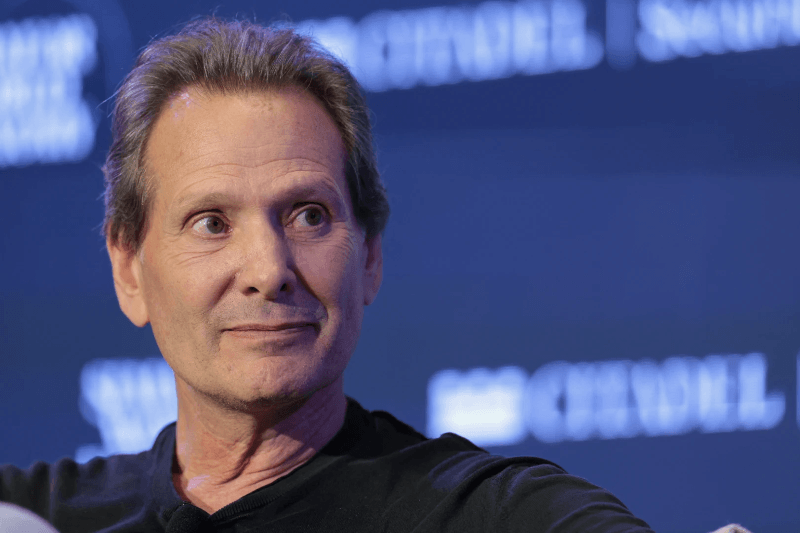

This year, James Wise, a trustee of the think tank Demos and a partner at the venture capital firm Balderton, received a link to a video of himself passionately explaining why he had invested in a new technology company.

In the video, he spoke enthusiastically about his great faith in the company's leadership and encouraged others to try its service. The problem was that Wise had never met anyone from the company or tried any of its products.

The video looked and sounded like him, but it was not him: it was an AI-generated fake. Far from impressing him, it left him concerned about the many ways these new tools could help fraudsters.

End Products Are Incredibly Lifelike

From phishing attacks to data breaches, cybercrime is already one of the most commonly experienced crimes in the UK. Last year, the country had the highest number of cybercrime victims per million internet users in the world.

"In part we are victims of our own digital success," Wise says. Britons have long been quick to adopt new technologies. But as AI grows more sophisticated, it is handing fraudsters new ways to trick people into believing they are someone they are not.

The US-based ElevenLabs has released a tool that can almost perfectly replicate any accent, while London-based Synthesia goes a step further, allowing customers to generate entirely new salespeople. The end products are incredibly lifelike, but the people in them don't exist.

Some Tools Are Arriving To Tackle This Challenge

ElevenLabs has made its rules on the use and misuse of its technology very clear. It explicitly states that users cannot use the voice-cloning feature for fraud, hate speech, discrimination or any form of online abuse. But not every company is as scrupulous.

In this digital age, how will a parent know that a video call from their child asking for emergency funds is real? And how should you respond to a voicemail that sounds as if it comes from your company when it may not? These questions are no longer hypothetical.

Fortunately, some services are arriving to combat this challenge. Just as quickly as some students adopted OpenAI's ChatGPT, tools such as Originality.ai were released to help teachers trace the source of a piece of content.